More transistors mean a higher probability of production failure, so a 1024-unit FP32 GPU is more likely to come out of the fab working than a 512-unit FP64_flexible GPU. Also, making 2xFP32 out of an FP64 unit would need more transistors than a pure FP64 unit, and with that more heat and maybe more latency.
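To put rough numbers on the "more transistors, more failures" point, here is a minimal sketch using the simple Poisson yield model, where the fraction of defect-free dies is exp(-area x defect density). The function name `poisson_yield`, the defect density, and both die areas are made-up values for illustration, not figures for any real chip, and the sketch assumes that more transistors simply means more die area.

```python
import math

def poisson_yield(die_area_cm2: float, defect_density_per_cm2: float) -> float:
    """Fraction of dies expected to have zero defects (simple Poisson model)."""
    return math.exp(-die_area_cm2 * defect_density_per_cm2)

# Hypothetical numbers, chosen only to show the trend.
DEFECT_DENSITY = 0.1  # defects per cm^2 (assumed)
small_die = 4.0       # cm^2, design with simpler units (assumed)
large_die = 6.0       # cm^2, design with fatter, flexible units (assumed)

yield_small = poisson_yield(small_die, DEFECT_DENSITY)
yield_large = poisson_yield(large_die, DEFECT_DENSITY)

print(f"small die yield: {yield_small:.3f}")
print(f"large die yield: {yield_large:.3f}")
```

Under this model the yield drops exponentially with area, so any extra transistors spent on flexibility are paid for twice: once in silicon and once in discarded dies.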

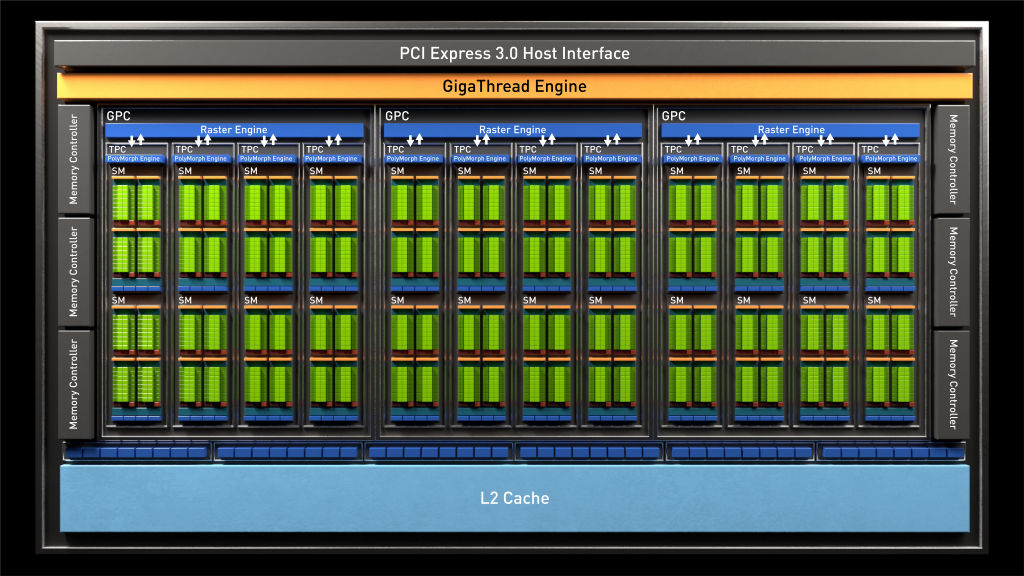

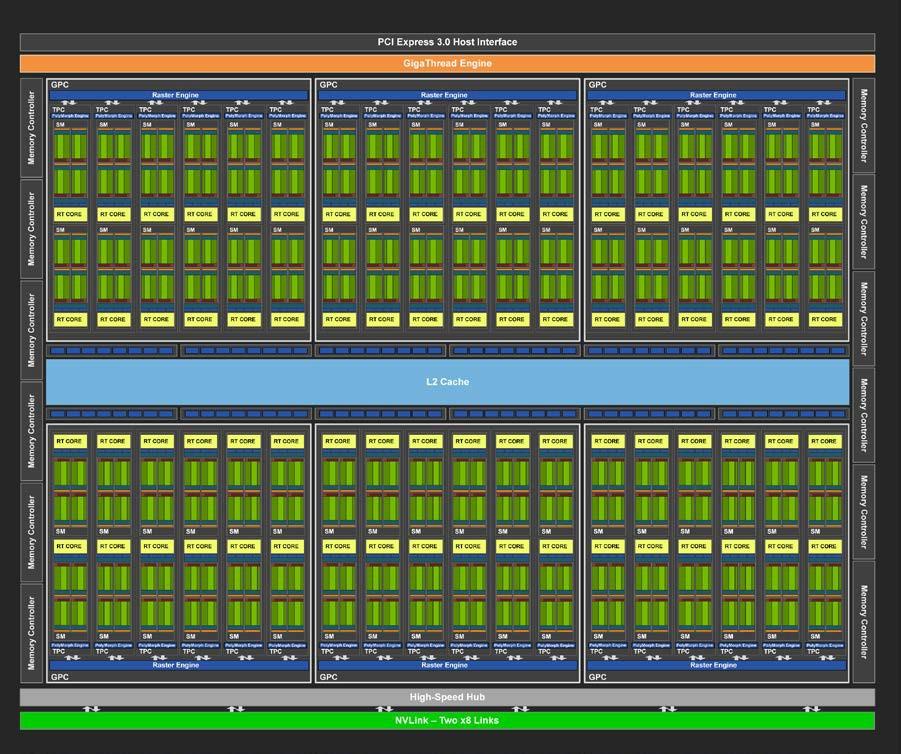

Why did Nvidia put both FP32 and FP64 units in the chip?

I think it's about market penetration, to sell as many as possible. Without FP64, scientific research guys can't even try a demo of scientifically important GPGPU software that uses FP64 (and even games could be using some double precision on occasion). Without FP32, game physics and simulations would be very slow, or the GPU would need a nuclear reactor. If there were only FP32, a neural network simulation would work at half speed or some FP64 summation wouldn't work. Who knows, maybe in the future there will be dedicated FP_raytrace cores that do ray tracing ultra fast, so no more painful DX9, DX11, DX12 upgrades, and better graphics.

Ultimately, I wouldn't say no to an FPGA-based GPU that can convert some of its cores from FP64 to FP32, or into special function cores, for one application, then convert them all to FP64 for another application, and even convert everything into a single fat core that does sequential work (such as compiling shaders). This would benefit people doing many different things on a computer. For example, I may need more multiplications than additions, and an FPGA could help here. But for now, money talks, and it says "fixed function for now": the best income is achieved with a mixture of FP64 and FP32 (and FP16 lately).

Why not just put FP64 units that are capable of performing 2xFP32 operations per instruction (like the SIMD instruction sets in CPUs)?

SIMD always expects the same operation on multiple data, which is less fun for scalar GPGPU kernels.
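The remark above that some FP64 summation wouldn't work on FP32 alone can be shown with a small sketch. It emulates FP32 on the CPU by rounding every intermediate result through the `struct` module; the helper names `to_fp32`, `sum_fp32` and `sum_fp64` are my own, and the input is picked on purpose to hit the limit of FP32's 24-bit significand, so treat it as an illustration rather than a benchmark of any GPU.

```python
import struct

def to_fp32(x: float) -> float:
    """Round a Python float (FP64) to the nearest FP32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]

def sum_fp32(values):
    """Accumulate with FP32 precision: round after every addition."""
    total = 0.0
    for v in values:
        total = to_fp32(total + to_fp32(v))
    return total

def sum_fp64(values):
    """Accumulate with plain Python floats, which are FP64."""
    total = 0.0
    for v in values:
        total += v
    return total

# Above 2**24, FP32 can no longer represent every integer,
# so adding 1.0 to the running total gets rounded away.
values = [float(2**24)] + [1.0] * 1000

print("FP32 sum:", sum_fp32(values))
print("FP64 sum:", sum_fp64(values))
```

The FP32 accumulator gets stuck at 2**24 while the FP64 one keeps counting, which is the kind of summation a researcher can't run correctly on an FP32-only chip without extra tricks such as compensated summation.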
